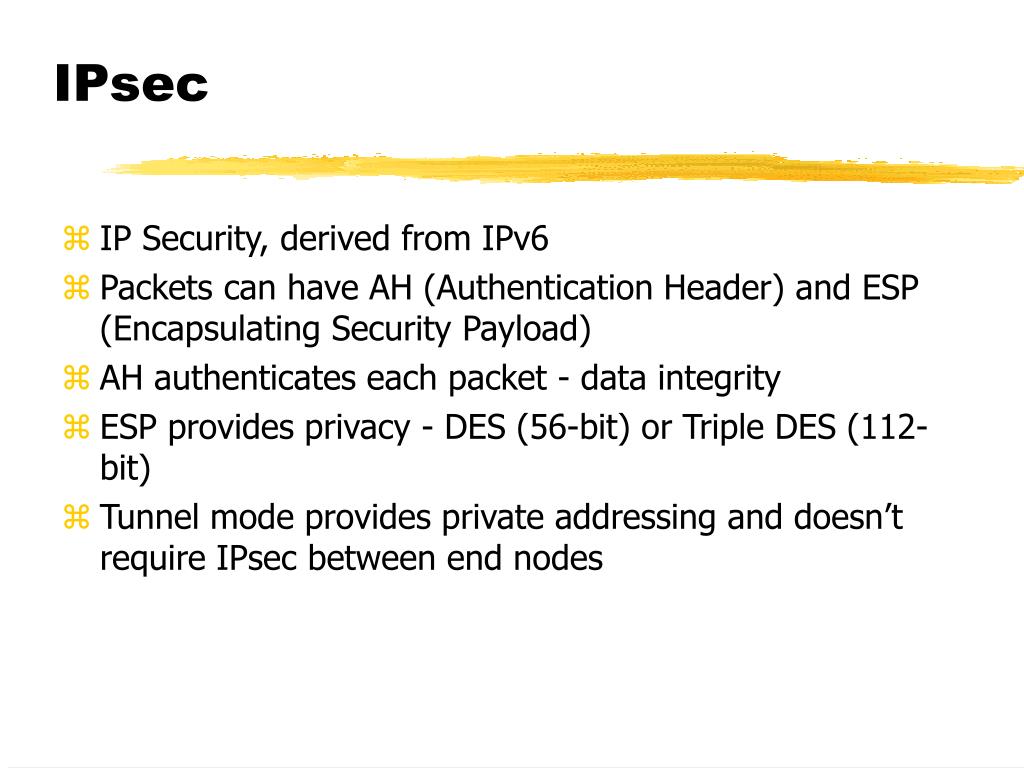

If you are tasked with selecting a VPN (Virtual Private Network) solution for your team or company, chances are high that you've looked into both IPsec-based and WireGuard-based VPNs as potential options. VPNs are often the preferred way to allow you and your teammates to access private infrastructure like Kubernetes clusters and file servers, and your ideal solution needs to be secure, easy to use, and easy to administer.

In this article, we compare IPsec and WireGuard, two protocols used in VPNs which allow businesses to connect remote networks. As providers of business VPN solutions, we focus on comparing the protocols specifically for VPN use within business environments. We look at both from the standpoints of security, user experience, and platform availability. Finally, we provide guidance on which might better suit your business VPN use case.

IPsec and WireGuard are both commonly used VPN protocols. IPsec is a network protocol used for the encryption of IP traffic. It is frequently used as the secure communication protocol for business VPNs, most commonly with a tunneling protocol like L2TP. IPsec is supported on many operating systems and device types, including embedded devices and network routers.

WireGuard is a modern VPN protocol that is simple to use and easy to implement on both new and existing networks. Unlike IPsec, it does not rely upon a dedicated protocol for tunneling: WireGuard offers VPN functionality by encapsulating TCP, UDP, and other IP traffic inside UDP packets with encrypted content. WireGuard is free and open-source, and implementations are available for major operating systems.

`+ fmt.Println("DEBUG - Receive PacketType_DIAL_RSP for connection ID=", resp.ConnectID)`

You are printing that you received the response before checking whether it is a valid response or not.

Just after that, we check if the dialResp is recognized or not.

This commit builds on top of the previous commit. It is noticed that the memory consumption of Konnectivity server, …

In order to mitigate the issue, we create a separate goroutine that tracks all the connections per stream, and if there is no activity in the stream after a specified timeout (configured via -grpc-max-idle-time), we close the stream, thereby releasing the resources.

The idea behind this approach is that in a successful connection lifecycle, the apiserver sends a CLOSE_REQ and proxy-server responds with a CLOSE_RSP, but for idle connections this never happens. In order to simulate the behaviour of apiserver sending the CLOSE_REQ, the proxy-server fires up a goroutine and tracks inactive connections; upon finding one, it sends a CLOSE_REQ itself to the same channel on which it is listening from the api-server. Upon receiving the simulated CLOSE_REQ, proxy-server follows the codepath of the successful lifecycle of the packet, i.e. forwards the CLOSE_REQ to the agent, to which the agent responds with CLOSE_RSP, and proxy-server forwards the CLOSE_RSP back to the apiserver.

There are also those connections which are stuck by never receiving the PacketType_DATA; for those connections, we close the stream once the …

This also fixes the issue of leaking file descriptors in kubernetes-sigs#276

    diff --git a/hack/local-up-cluster.sh b/hack/local-up-cluster.sh
    + # This controls the protocol between the API Server and the Konnectivity
    + # server. Supported values are "GRPC" and "HTTPConnect". There is no
    + # end user visible difference between the two modes. You need to set the
    + # Konnectivity server to work in the same mode.
    + # This controls what transport the API Server uses to communicate with the
    + # Konnectivity server. UDS is recommended if the Konnectivity server
    + # locates on the same machine as the API Server. You need to configure the
    + # Konnectivity server to listen on the same UDS socket.
    + # The other supported transport is "tcp".
    --requestheader-allowed-names=system:auth-proxy \

    rg "$close_req\$" /tmp/kube-apiserver.log > /dev/null || req_status=1
    rg "$close_rsp\$" /tmp/kube-apiserver.log > /dev/null || res_status=1
    if ] then
    echo "Interesting case for $connection_id"
    echo "CloseResponse missing for $connection_id"

We are interested in the case of "Both missing from $connection_id". All of the connection IDs that are printed for this case, if cross-checked against the proxy-server logs, have only the following log snippet, for example connectionID=505.
